2. Reducing Scope – SDLC Strategies

I started this series with a high-level overview on Reducing Scope. The first group I listed is SDLC Strategies. The entire SDLC is often not under the scope of IT, but under business or product management. The challenge can be explaining the impact of certain strategies on the SDLC to people who are not IT focused. Often the gap between IT and business can be bridged with a little explanation of how certain choices in product strategy can affect SDLC costs, be these choices architectural or simply strategic. It does, however, take a certain organizational culture to do this effectively.

“Corporate Mandate”

Ask anyone in QA, development, information security, etc. what their corporate mandate is, and you usually get an answer like “Zero Incidents” or “100% defect free”. Not only is this not possible, it is often not really what management expects. The risk associated with the application should determine the level of effort and amount of testing required. A free web service listing upcoming events, a free blog such as this, or an information service may not need the same testing effort as an on-line payments, financial calculation or personal information service. In the first case, you may need only a single test for functional compliance; in the second, perhaps you decide to add boundary tests at the upper and lower limits and then a negative scenario as well. You may also have additional testing in security and integration, and far more regression or continuous testing needs. This is why defining some criteria for percentage coverage can be helpful. In a low risk application, perhaps the business owner will accept 30% coverage. In a high risk application, perhaps the business owner will express the need for 100% or greater coverage.
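As a rough sketch of how such criteria might be written down, the Python below picks test inputs for a numeric field based on risk and checks a coverage target. The risk tiers, the function names and the idea of counting coverage as tested requirements over total requirements are my assumptions for illustration; only the 30% and 100% figures come from the examples above.

```python
# Hypothetical coverage targets per risk tier, in percent.
COVERAGE_TARGET_PCT = {"low": 30, "high": 100}


def planned_inputs(risk, lower, upper):
    """Return the input values to test for a field valid in [lower, upper]."""
    nominal = (lower + upper) // 2
    if risk == "low":
        # Low risk: a single test for functional compliance.
        return [nominal]
    # High risk: functional test, both boundaries, and one negative
    # scenario just outside the valid range.
    return [nominal, lower, upper, upper + 1]


def meets_target(risk, tested_requirements, total_requirements):
    """Check whether the agreed coverage target for this risk tier is met."""
    return tested_requirements * 100 >= COVERAGE_TARGET_PCT[risk] * total_requirements


if __name__ == "__main__":
    print(planned_inputs("low", 1, 100))
    print(planned_inputs("high", 1, 100))
    print(meets_target("low", 3, 10))
    print(meets_target("high", 9, 10))
```

The point is less the code than the conversation: once the targets sit in one agreed table, the business owner can change a number instead of debating "zero defects".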

A second aspect is how long this application and release are expected to be in production. If new releases are made quarterly, or the application has a short life-cycle, perhaps a lower level of testing is warranted. If, however, you are developing an API that is to be used corporately by multiple applications and clients for the next 5-10 years, you may want to test that API more thoroughly, as issues could replicate across your organization and some of those clients may not even exist yet. More on this subject under Architecture.

Lastly, I wanted to mention the aspect of customer expectation. If I am using a Google Labs application for free, my expectation as a customer who finds a defect may be very different from finding one in my tax return application. A young start-up company showing more of a proof of concept may find its customers more tolerant of a defect than those of a mature banking application. Readers of a free blog may be more tolerant of my poor proofreading than readers of a paid subscription.

Architecture

I have written much about the need for web services to be both robust and sustainable. In the data economy, mobile usage and the very structure of web services have changed. Much of what falls under architecture today is strategies around embracing device or client diversity: developing, testing and maintaining the life-cycle of the API (web service) independently of that of current or future clients. I have written before on the costs and management of that API and client relationship.

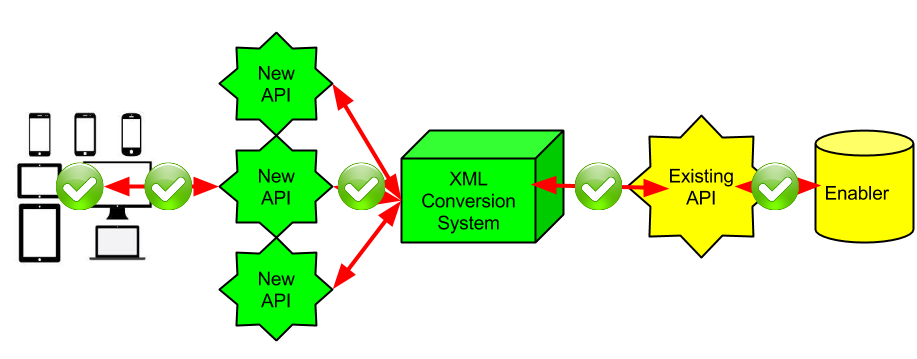

Many of our customers are now using some form of intermediary to create alternate versions of an API that differ in size, structure, version, language or identity format. This creates multiple variations of the API that rely on the same back-ends, and the intermediaries are used to support device or client diversity.

With new APIs and code come increased testing requirements and potential quality issues. Looking at the green check marks, do you need to test at each of these locations? If a problem is identified, how does one troubleshoot it? How can developers or testers know the service is working when a client may not even exist yet? There is a need to be sure that the points behind the XML conversion system are unchanged, thoroughly tested and under continuous regression testing before moving left in the diagram above, yet customer experience is seen only at the leftmost check mark. Understanding exactly how the message structure is altered as it traverses between the client and the end server can be difficult, as the rules for XML conversion can be scripted in an entirely different language created by the vendor of the XML conversion system.
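One way to picture a regression check at the conversion point is the minimal Python sketch below. It assumes the intermediary turns a flat backend XML reply into JSON for the client; `convert_for_client` is a hypothetical stand-in for the vendor's conversion rules, and the sample message and field names are invented for illustration.

```python
import json
import xml.etree.ElementTree as ET

# Invented sample of what a back-end might return.
BACKEND_REPLY = "<event><name>Launch</name><seats>40</seats></event>"


def convert_for_client(backend_xml):
    """Hypothetical stand-in for the intermediary: flat XML to JSON."""
    root = ET.fromstring(backend_xml)
    return json.dumps({child.tag: child.text for child in root})


def lost_fields(backend_xml, client_json):
    """Return backend fields whose values did not survive the conversion."""
    root = ET.fromstring(backend_xml)
    client = json.loads(client_json)
    return [child.tag for child in root if client.get(child.tag) != child.text]


if __name__ == "__main__":
    converted = convert_for_client(BACKEND_REPLY)
    # An empty list means every backend field reached the client format intact.
    print(lost_fields(BACKEND_REPLY, converted))
```

Running a check like this on both sides of the conversion system helps narrow down whether a defect sits in the back-end or in the conversion rules, even before any real client exists.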

Conclusion

Although it may not fall within the tester's realm to decide on corporate strategy, it is the tester's obligation to use their knowledge of testing to explain the impact that one strategy has on quality and testing needs versus another. What makes this more challenging is that changing architectures bring the need for new skills, and often higher defect density. This can make previous KPIs and processes redundant and make any estimation of the amount of testing required much more complicated.

As always, Comments?