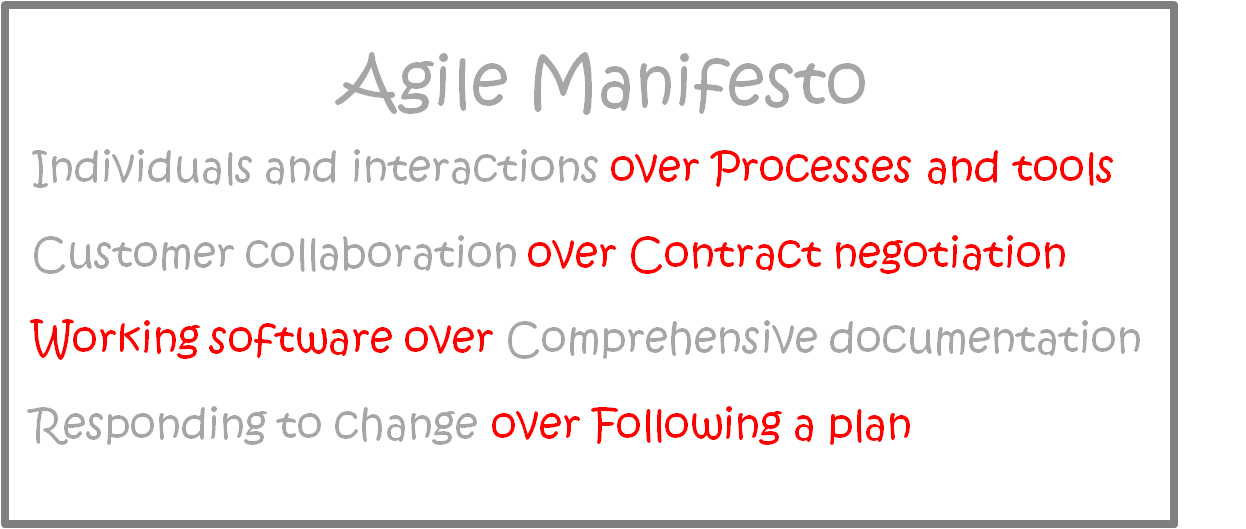

This post adds to the series on costing models, which so far covers Agile Anarchy Costing, Backing In Costing and Service Planning Costing. Just Test Something is one of the more common models. The intent here is not to crown one model as king, but rather to evaluate the potential benefits and pitfalls of relying on one model exclusively. My hope is that by sharing these approaches, QA organizations will evaluate their current models and perhaps find room to tune them for greater excellence.

Under the Just Test Something (JTS) method of costing, the price for a QA project is determined by external pressure rather than by formal requirements for test coverage. Where Backing In Costing (BIC) relies on past precedent, JTS is usually based on time to market, cost or some other business requirement or external force.

Now, before you grab your pitchforks and start packing the wood around the stake for my heretical costing model, let me mention that “…whatever we have time for…” was the most common response and feedback I got for the Percentage Coverage article. It was also the most common view among QA testers, as opposed to QA managers, of how they cost QA, with statements like “we get x weeks to test what we can”.

For example, the marketing department at JTS Model Inc. issued a press release announcing that JTS software 1.0 would be GA in 2 weeks' time. The final cut-off for any potential code fixes, however, was set in a Go/No Go meeting 32 hours before GA. Development was still struggling to add the last few change requests raised by the user requirements team. They expected the next code drop to be ready in 3 days' time, but delivered it 5 days later, leaving QA 3.5 work days of testing time. Working extra hours, QA was still finding a significant number of quality issues at the deadline meeting. The CEO decided, however, that the GA deadline was of greater concern than any as yet undiscovered code issues. As a result, performance, load and security testing were ignored, and only basic functional tests covering some uncalculated percentage of the application were completed.

Typical of start-ups and immature QA departments, Just Test Something (JTS) is often the result of a lack of QA focus and is common in organizations that consider QA only an “un”necessary evil, often bordering on having users do final QA in production. Not to be confused with the recent trend of offering bug bounties, JTS places QA on some scale between “wish we could skip this step/expense” and “I guess we have to say we did SOME LEVEL of QA in the check box”.

JTS pays little attention to the number of services, percentage coverage or number of release drops. In fact, it usually involves very little planning and is mostly reactive. The process is “whatever we have time for, get busy”. What is to be tested, and how it will be done, is left up to a QA manager or even the individual tester to decide.
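To make “whatever we have time for” concrete, here is a minimal sketch of how a JTS plan effectively gets built: the time budget is fixed by outside pressure, and test areas are taken in priority order until the hours run out. The area names, priorities and hour estimates are hypothetical and purely illustrative; only the 3.5 work days comes from the example above, and the 8-hour work day is my assumption.

```python
from dataclasses import dataclass


@dataclass
class TestArea:
    name: str
    priority: int      # 1 = highest priority (hypothetical ranking)
    est_hours: float   # hypothetical effort estimate


def plan_jts(areas, budget_hours):
    """Pick test areas in priority order until the fixed time budget runs out.

    Areas that do not fit in the remaining hours are skipped, not shrunk.
    """
    planned, skipped = [], []
    remaining = budget_hours
    for area in sorted(areas, key=lambda a: a.priority):
        if area.est_hours <= remaining:
            planned.append(area.name)
            remaining -= area.est_hours
        else:
            skipped.append(area.name)
    return planned, skipped, remaining


# Hypothetical test areas and estimates, for illustration only.
areas = [
    TestArea("core functional", 1, 16),
    TestArea("new change requests", 2, 10),
    TestArea("performance", 3, 12),
    TestArea("load", 4, 8),
    TestArea("security", 5, 12),
]

# 3.5 work days of testing time, assuming an 8-hour work day.
budget = 3.5 * 8

planned, skipped, remaining = plan_jts(areas, budget)
print("Tested: ", planned)
print("Skipped:", skipped)
print("Hours left:", remaining)
```

Note that nothing in the function asks what coverage is needed. The budget is the only input that matters, which is exactly the point of JTS.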

Advantages

- Low accountability and plausible deniability: QA can always say it was not given enough time, and there is usually a certain amount of acceptance that defects will make it into production.

- Costs are usually tightly controlled and known; it's only the outcome (quality) that is estimated. Seldom do issues like percentage coverage, number of services, number of test cases, etc. need to be described to management.

- Testers' focus and time naturally shift to frequently used parts of the application or parts that have more defects in the code, since the testing structure is less rigid and testing is focussed on highest priority, not total coverage.

- Flexibility for testers to test as and how they see fit: determining their own tools, process and focus is often part of JTS and, together with low accountability, is attractive to some QA staff.

- A final Go/No Go meeting is usually part of the SDLC, in which more than just QA weighs in on whether “enough” testing was done. If the level of QA is too low, this meeting can provide a last-minute reprieve.

Disadvantages

- The QA role is heavily diminished, lessening QA's credibility and its ability to weigh in and ask for an extension in a Go/No Go meeting. Often little formal gating is done, and code is thrown at QA to get it off development's plate, resulting in frequent release cycles.

- Lack of process can result in QA's attention and resources not being evenly distributed, with QA testing the most common parts of the code multiple times while ignoring others. The result is uneven QA coverage and possibly deeply embedded defects that can be missed for many releases.

- Certain steps, such as performance testing or security testing, are more frequently sacrificed to the constraints. Eventually these steps fall out of the testing process entirely, as it becomes expected that these aspects will be ignored.

- An organization's lack of focus on QA usually results in little training or education being spent on developing QA skills, process or tools. The focus, if anything, is on reporting progress. The result further decreases the efficiency of what little QA is being done.

- Poor QA rapidly leads to a poor reputation. At some point management's focus shifts to “Fixing Quality”, and alternate QA strategies like outsourcing, off-shoring and restructuring become commonplace as attempts are made to fix previously missed defects and repair a “weak” QA organization.

Conclusion

In reality, any QA department needs to balance time to market and other pressures with QA coverage. As mentioned in the first post of this series, these are not static models; companies may use one or more of them and be positioned anywhere on a scale from slightly applying a model to mostly relying on it. QA may wish it had unlimited time and resources at its disposal to do 100% test coverage, but this is rarely the case. What defines the JTS model is that QA coverage is determined by the pressure placed on it and not by the need to do any particular level of due diligence.

So put away your pitchforks, and add a comment below if you wish to add to or detract from this post.