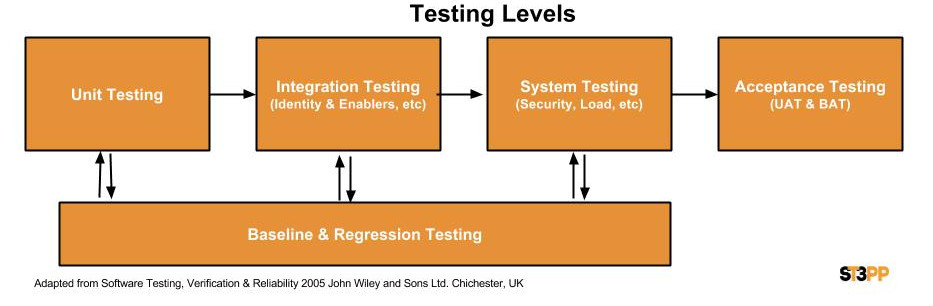

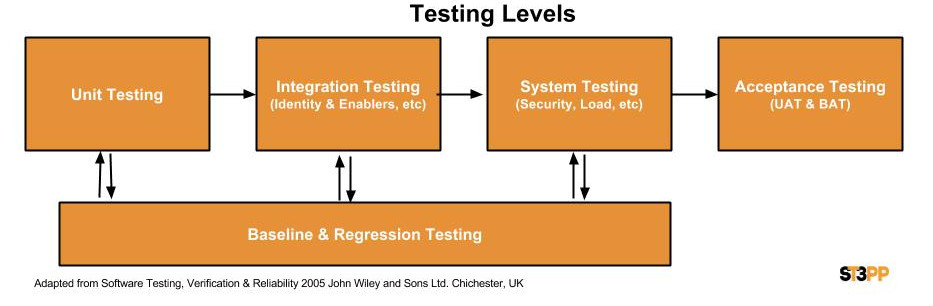

Following on from SDLC strategies, the second group or area to focus on when attempting to reduce the total amount of testing is ways to reduce the number or Test Iterations or levels.

Every organization has their own st3pps in their SDLC and organization structure. This often depends on the defined roles and functions in the organization and their development approach. A large waterfall based organization may have dedicated security, identity and load testing teams, while a smaller agile organization may have these functions fall under a single team or person. In every organization, there is a struggle between finding the most efficient process vs budget, personal agendas and overcoming inertia. “Its always being that way”, and “its done by a different team” does not imply optimized.

Unit Testing

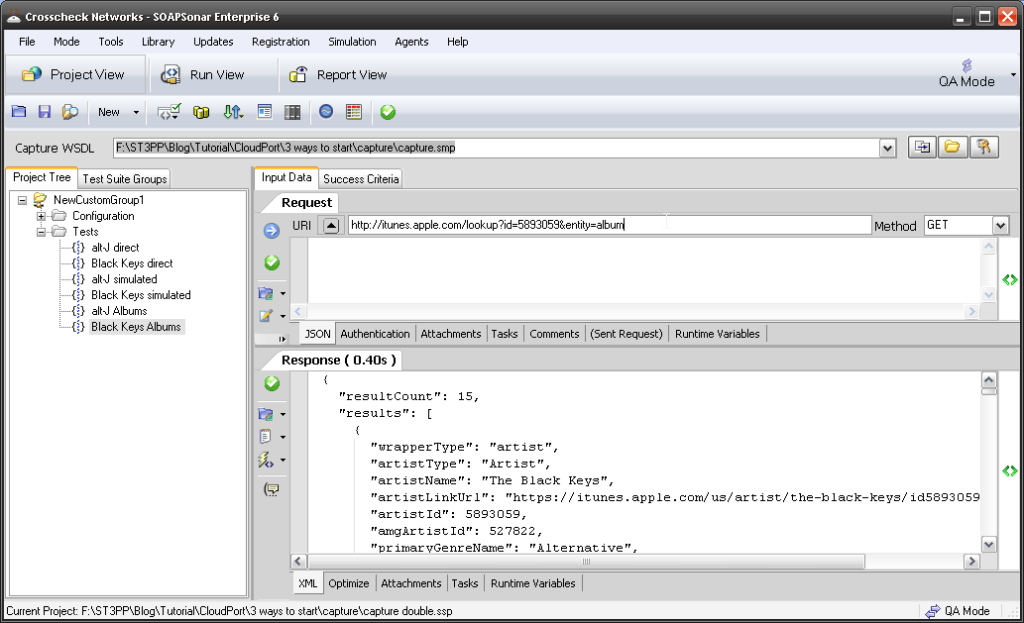

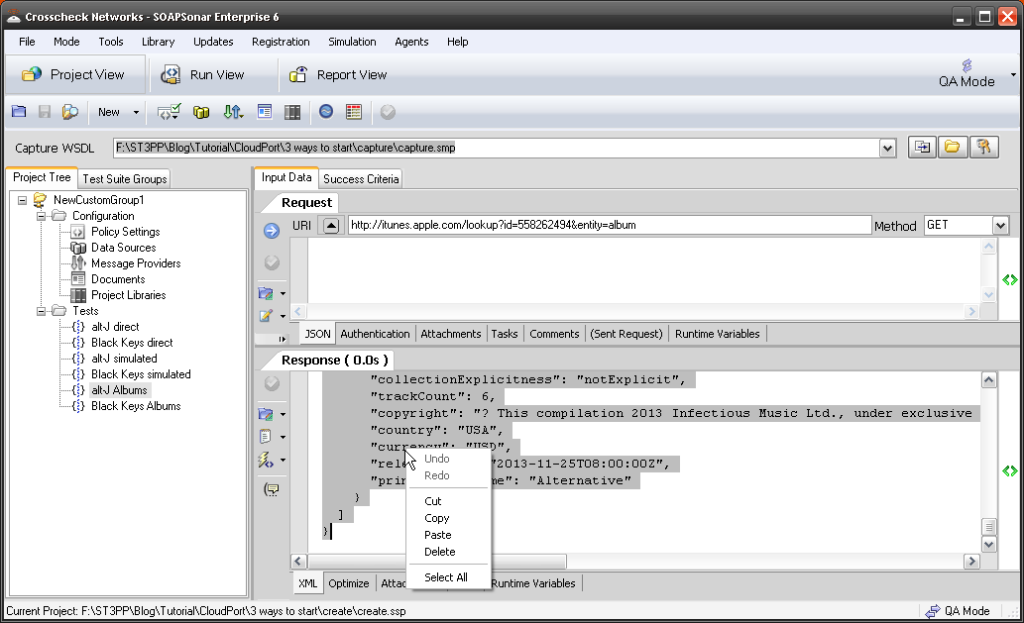

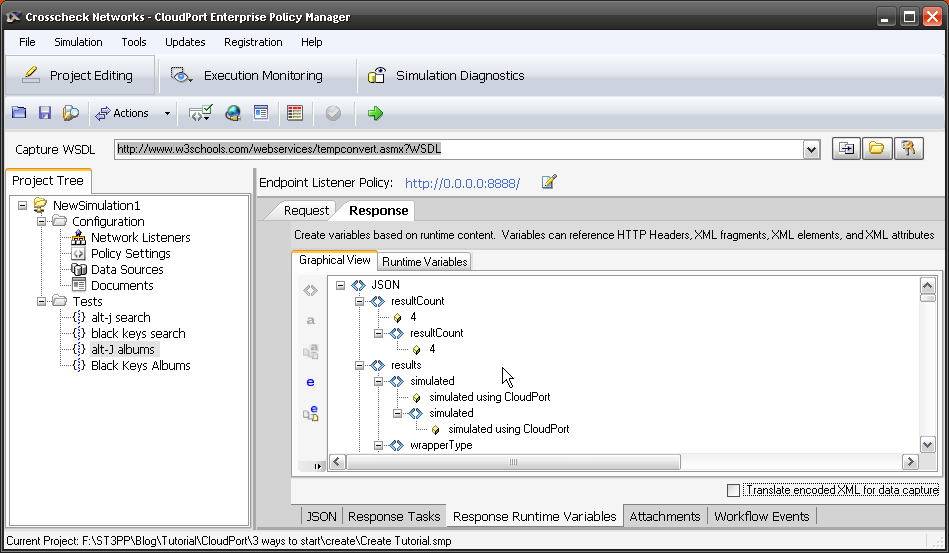

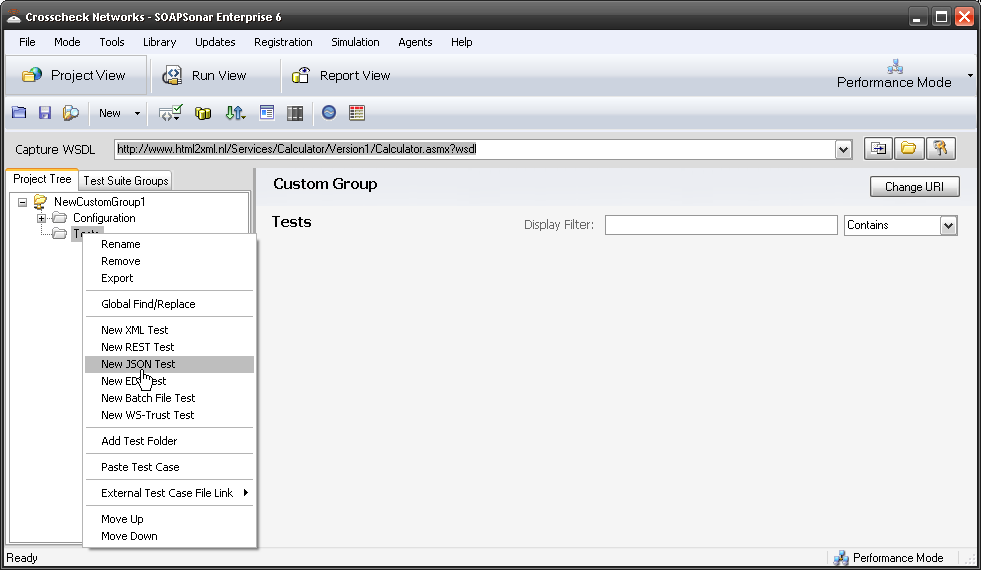

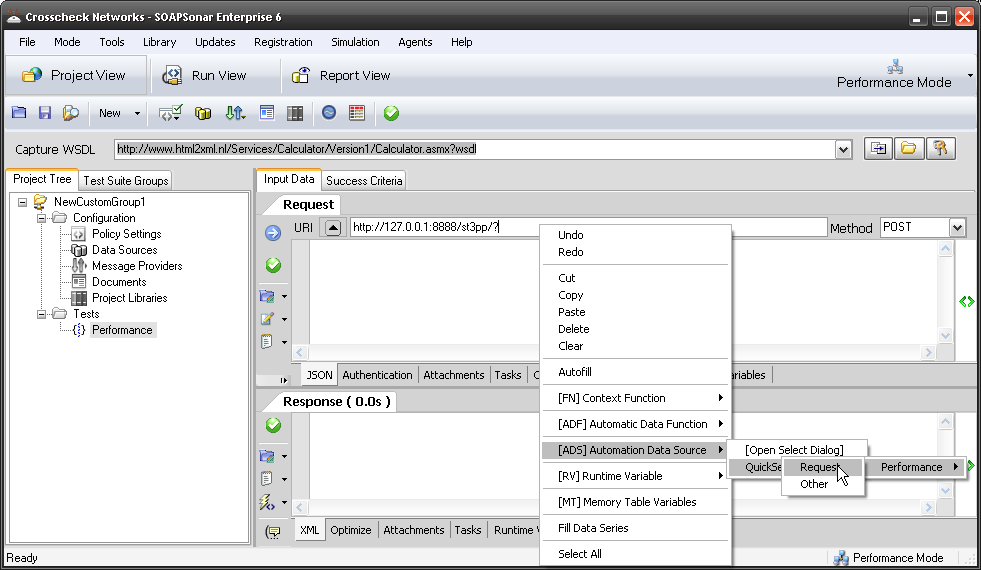

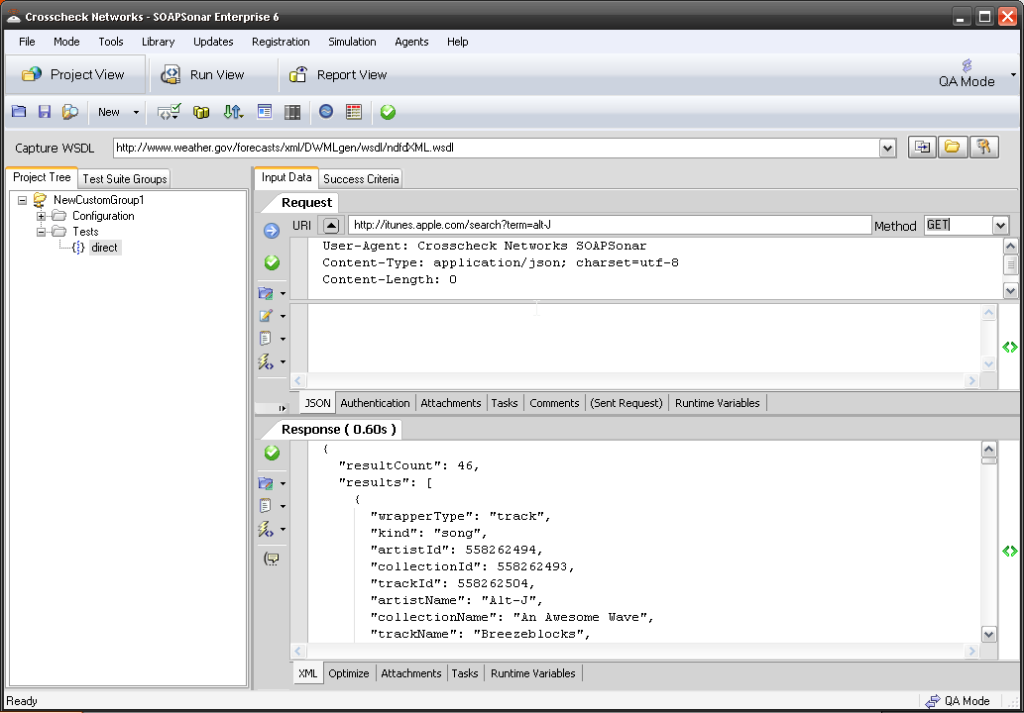

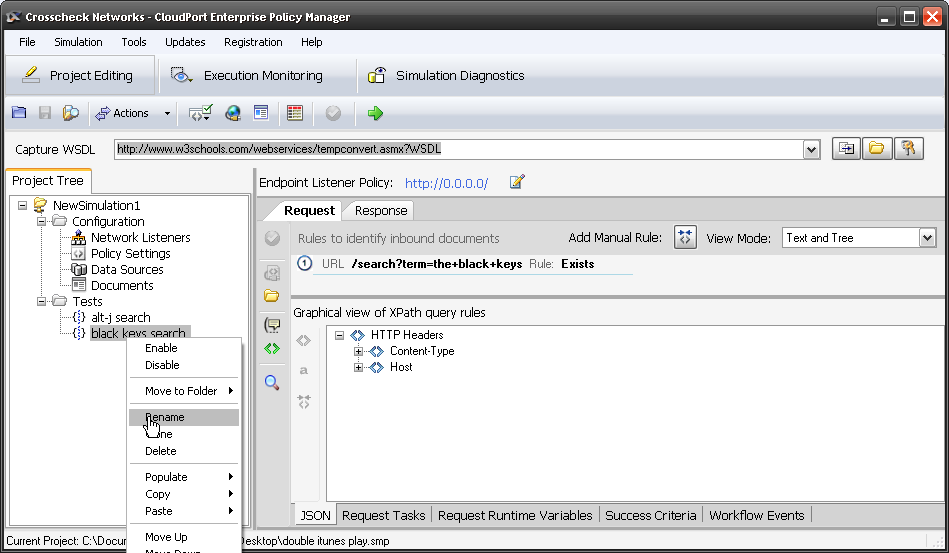

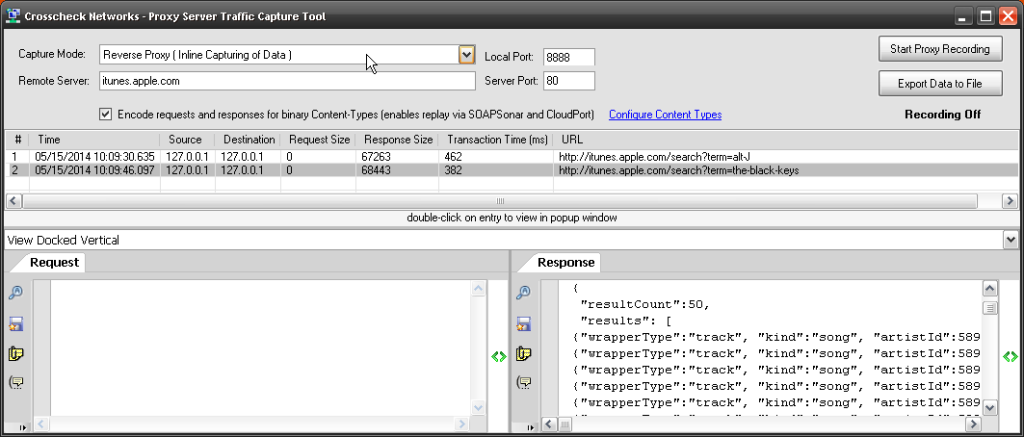

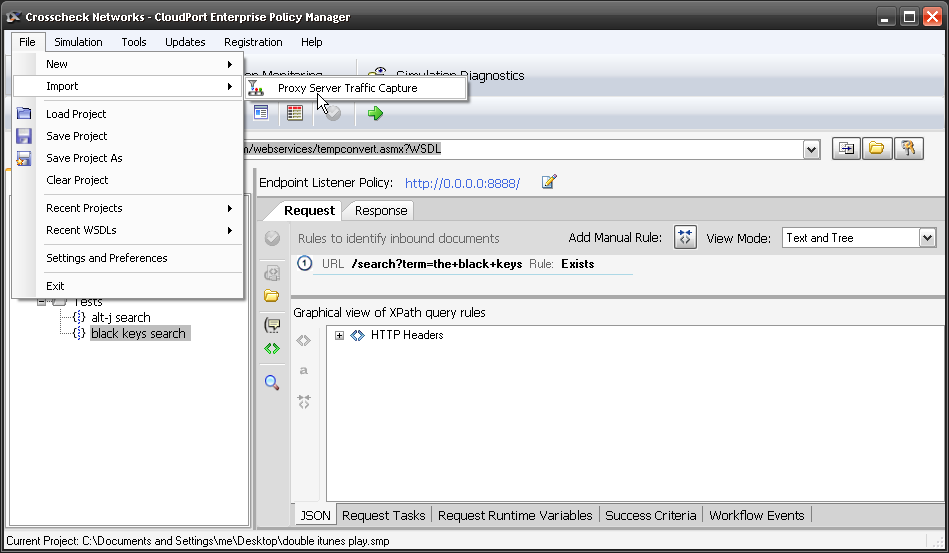

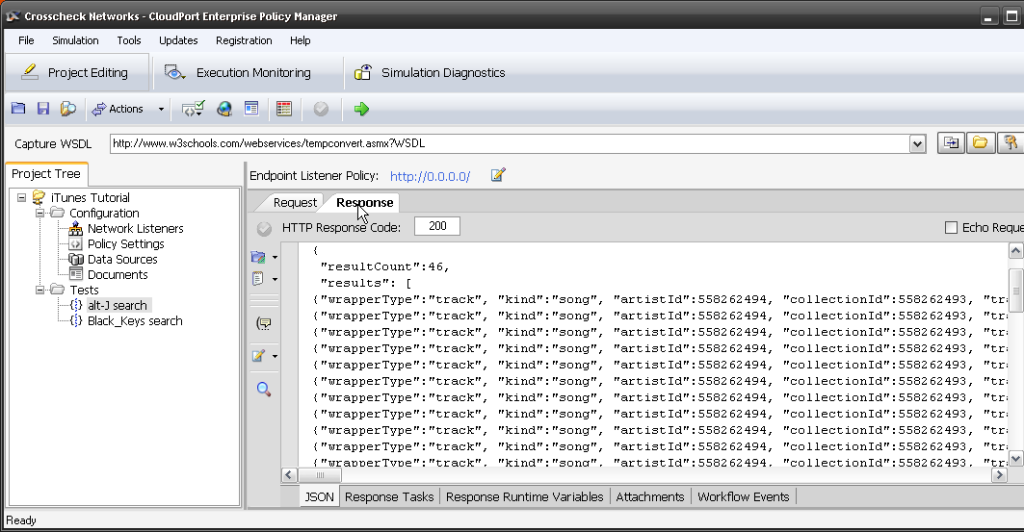

With JSON services not having WSDL or Schema, many organizations have had to find new ways for Development and Testing to work together to ensure the correct coverage. Developers and testers may work off the same service description document, but how accurate is the document and how well does it match the developed services? What if a developer added or missed some functionality when coding? How does Testing even know what services are there for testing? Group development like Agile or Extreme programming may help, yet requires testers to have a fair understanding of JSON.

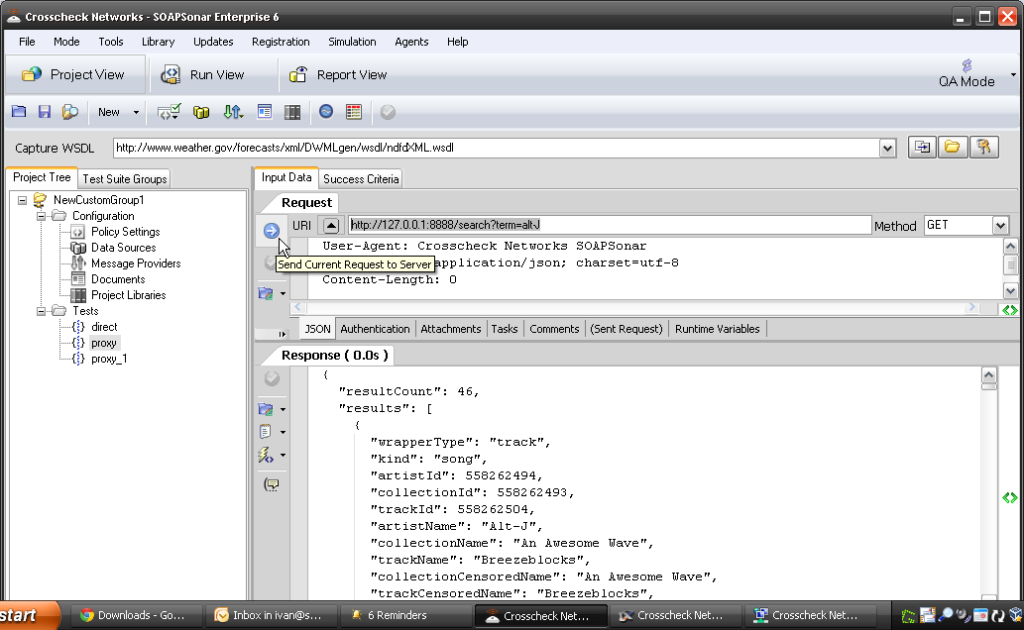

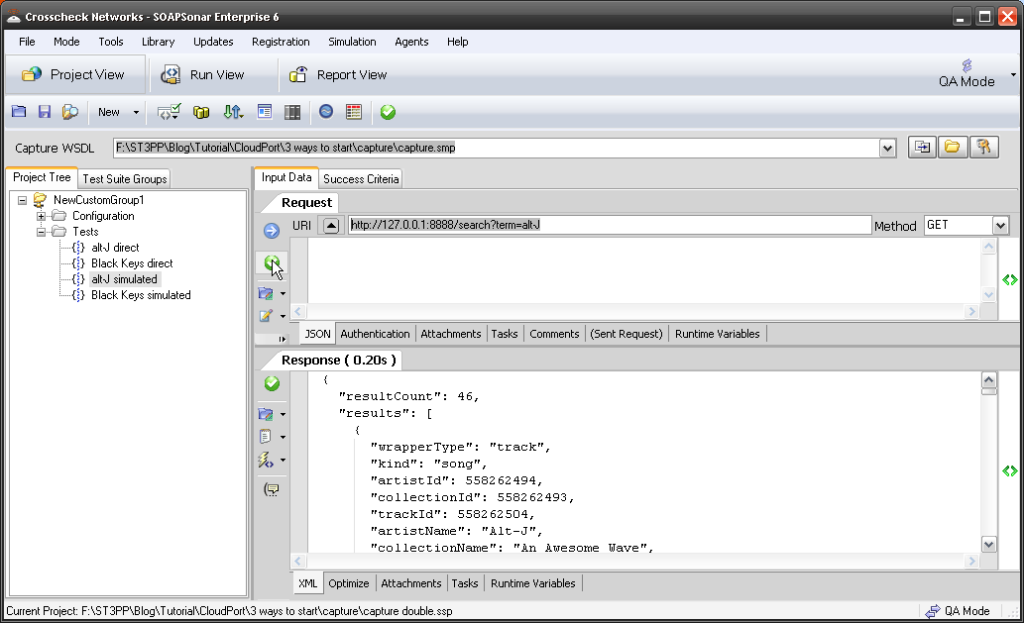

A second challenge is how do you gate or ensure that developers have done at least some level of due diligence before passing the code drop over to Testing to begin testing. If the defect density is to high, and code needs to be heavily reworked, the entire test cycle is wasted. If previously found issues are not suitably addressed, the same retesting is again a waste. Release management can take significant amount of time and resources. To prevent this, various organizations have tried different approaches. From formal sign-off’s, to change documentation or release management and change management tools. Another approach, is to have one team develop the basic unit test, and this test be used as the gating process. Testing then takes these unit testes and expands them out to include far more intensive coverage. In SOAP its most often the testers that develop the unit test, but with JSON, it is now sometimes the developers that develop the unit test together with the service. Sharing tools and test cases only makes sense, as the developers require some way to test the code they developing in any case. Handing it to testing to expand is good practice, to ensure that “fresh eyes” are used. But what if a developer adds code not in documentation and does not hand a test case over either? Is this risk acceptable?

Whatever the approach, the objective remains the same, from release 0.1 to GA, how can one reduce the number of test cases. The concept also remains the same – Shift as much Testing as possible left, or earlier in the SDLC right to testing while developing and making sure that “code drops” have a certain amount of maturity.

Integration Testing

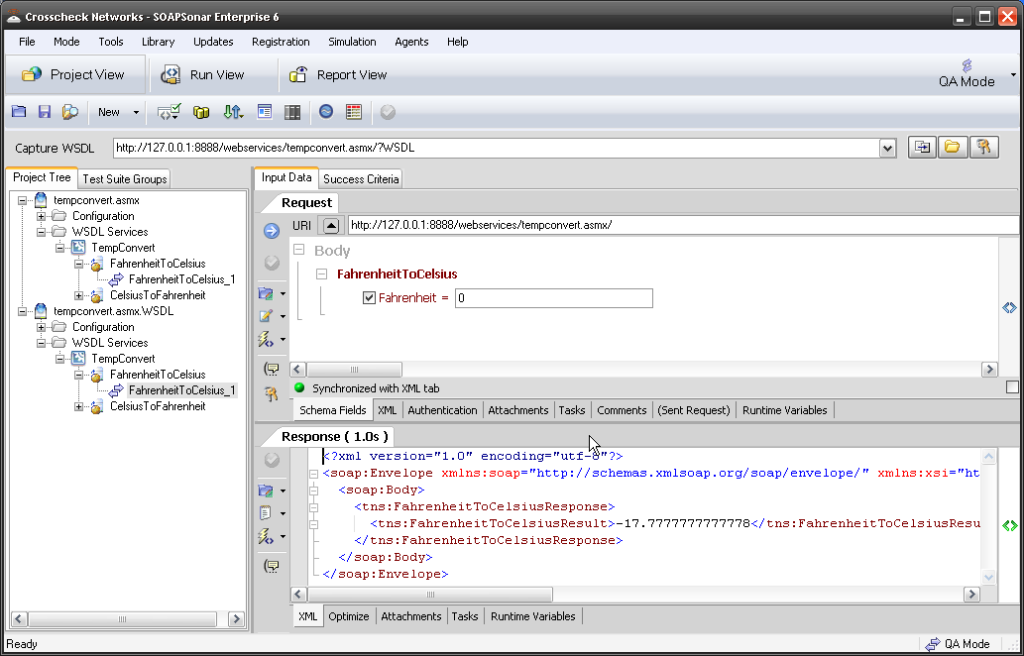

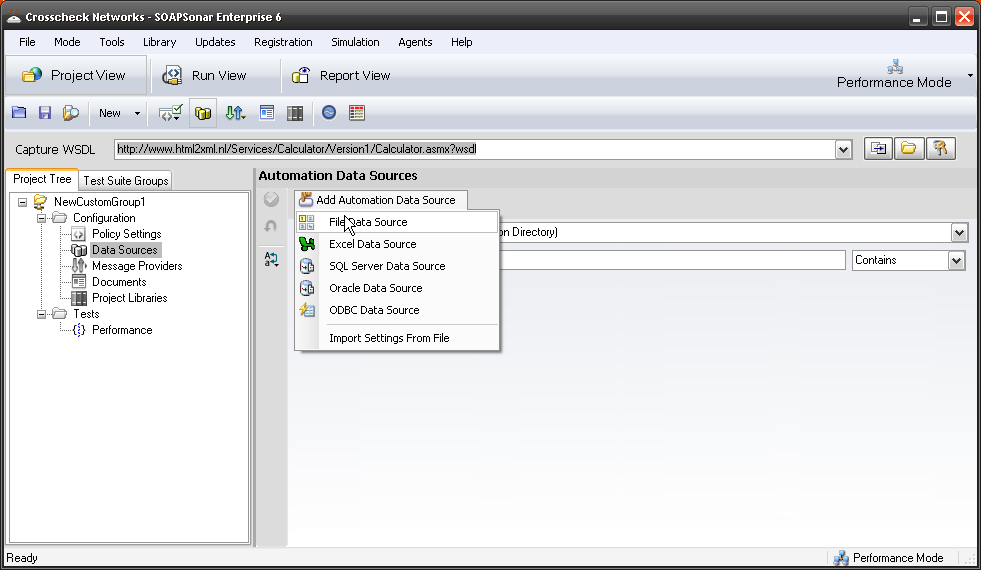

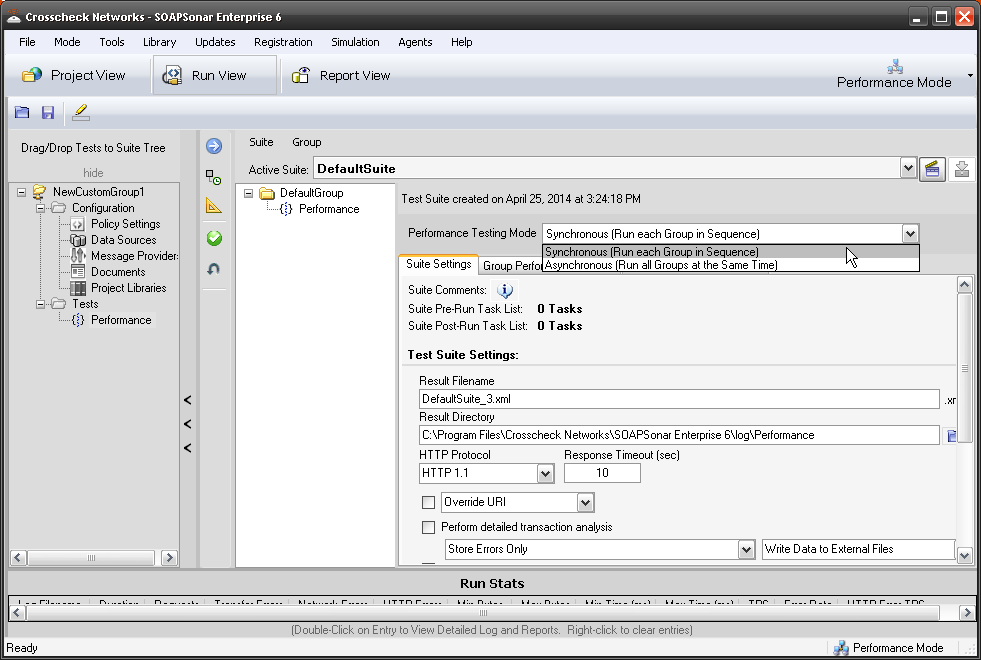

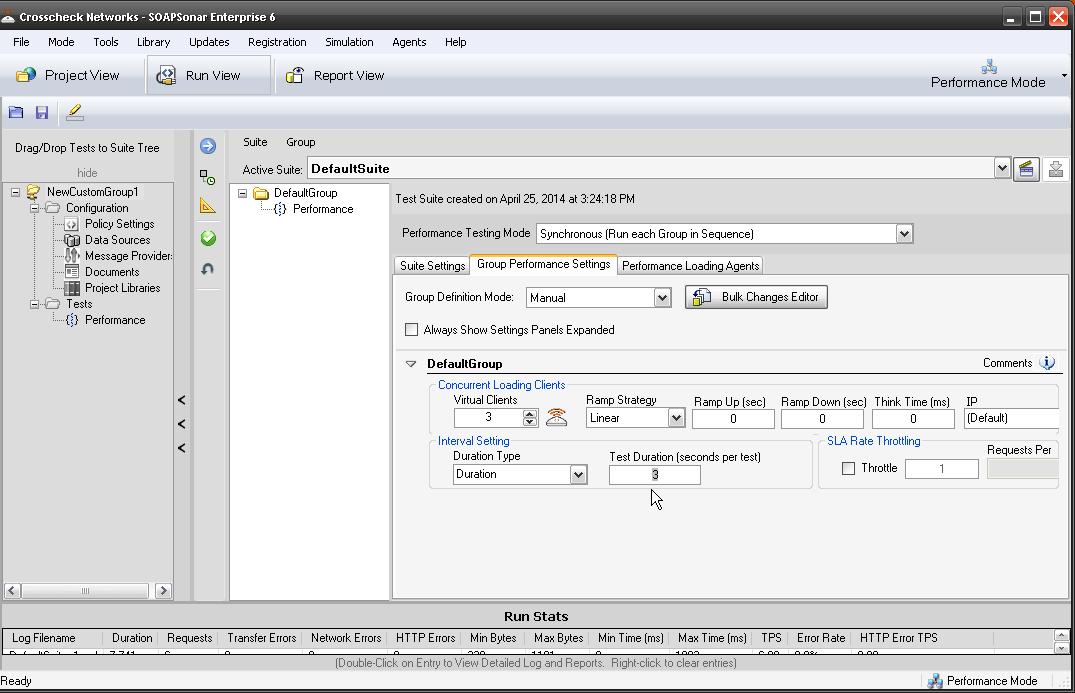

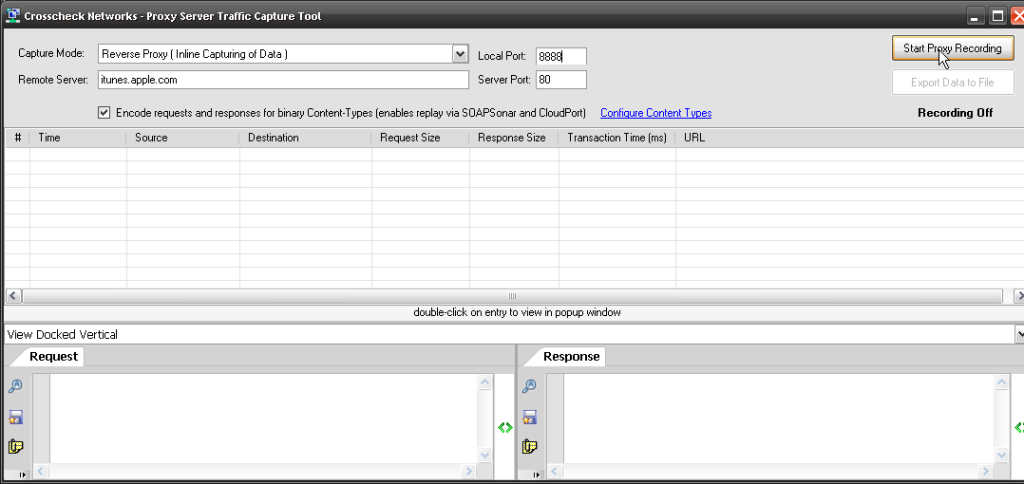

Integration testing of old was usually a different team to that of Unit testing. As per the post on Continuous Testing in Agile, if using and automation tool, the test cases should be the same. Integration testing, should just include expanding the test cases developed in Unit Testing to include things like encryption, cookies, identity, performance of each service (and enablers) etc. These individual test cases then linked for automation in what we call chaining. Integration testing often then be done concurrently with unit testing, much earlier in the SDLC.

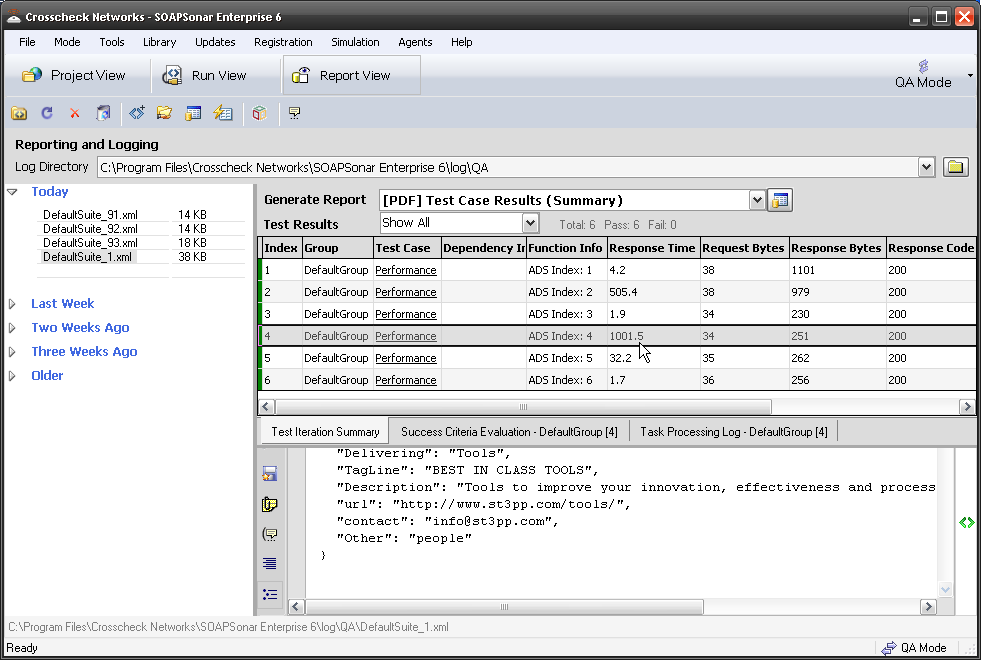

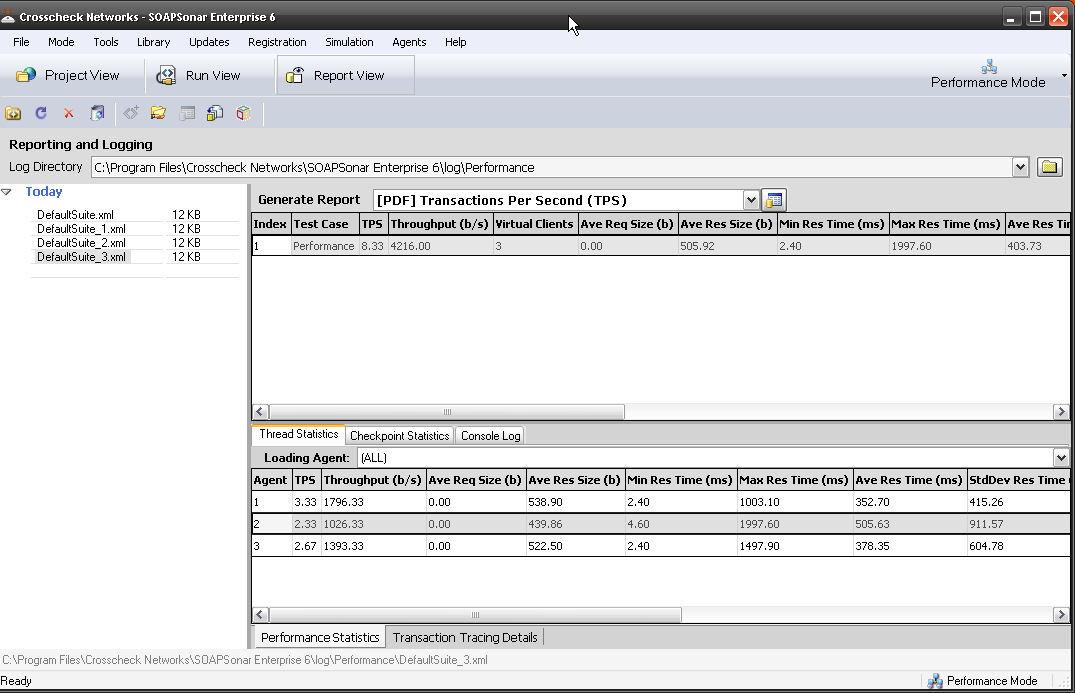

Using the right automation integrated tool, that supports encryption, identity, performance and as many of your integration tests in one, enables the elimination of distinct test cycles for each of the Integration tests. SOAPSonar’s ability to do functional, security, performance and load testing using the same test case, is the number one reason customers say they selected it. That said, Performance of a individual service is something I recommend be part of a unit test and a functional requirement.

Consolidating integration tests into as few as possible and shifting as much as possible into functional unit test cycle can greatly reduce the number of iterations. The impact of removing a single test iteration can clearly be seen in service plan costing model. This is not about increasing risk by skipping systems tests..

Systems Testing

Systems testing requires a certain amount of code to be developed and tested, and the environment to be built. Moving it too early in the SDLC can actually add to the testing needs. On the other hand, if the integration and unit tests are all automated, and completed successfully, systems testing becomes more about the environment and less about code. Finding code quality issues at this point can be very expensive and troublesome to fix in time.

Again if all the unit tests, and integration tests are automated in the same test case, and if the tool offers the ability to do systems test eg geographically dispersed load testing, the effort required during this iteration can be greatly reduced. A note on performance vs load testing. Too many times I hear that performance testing is left till Systems Testing and suddenly its discovered days before release that there are performance issues. How a service performs should be tested far earlier. At this stage, Load testing should be establishing that the environment (servers, network and other infrastructure) is suitable and not a individual services or enablers performance.

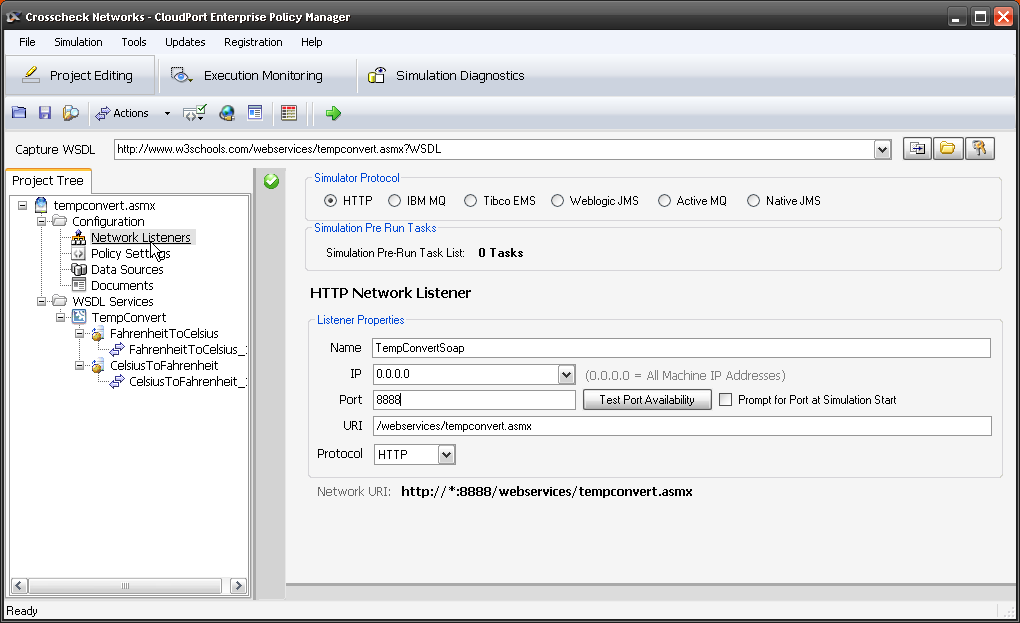

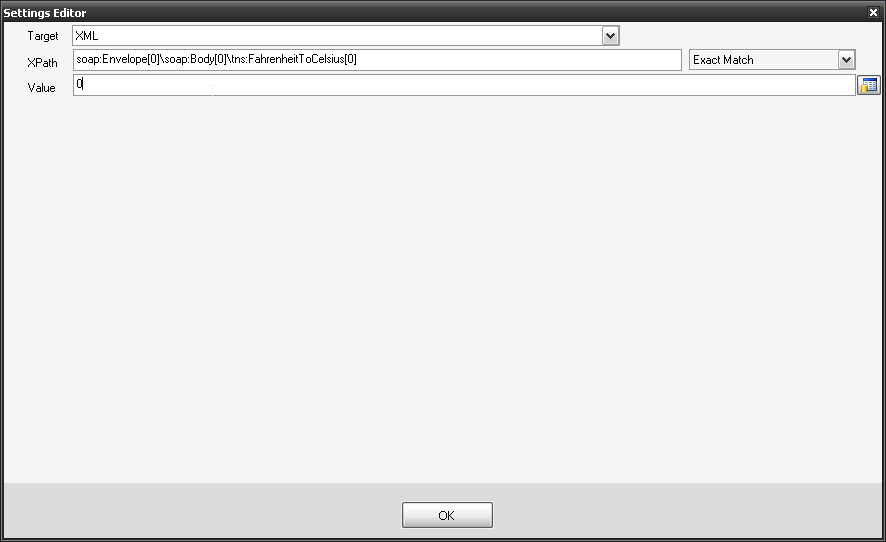

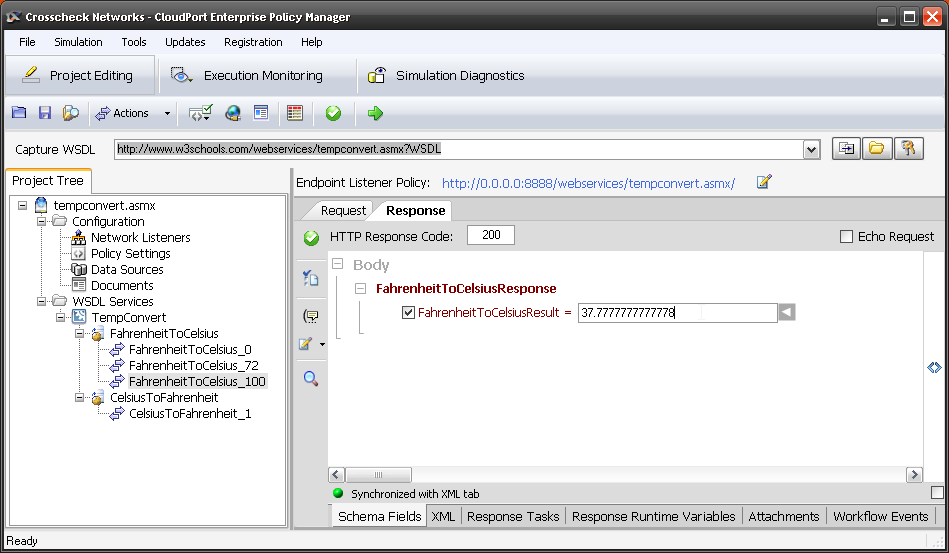

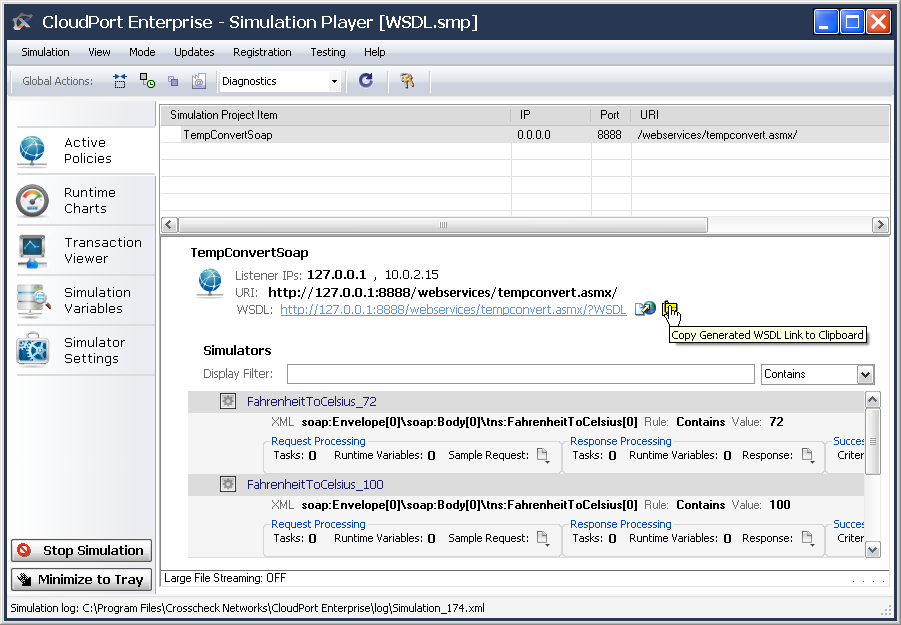

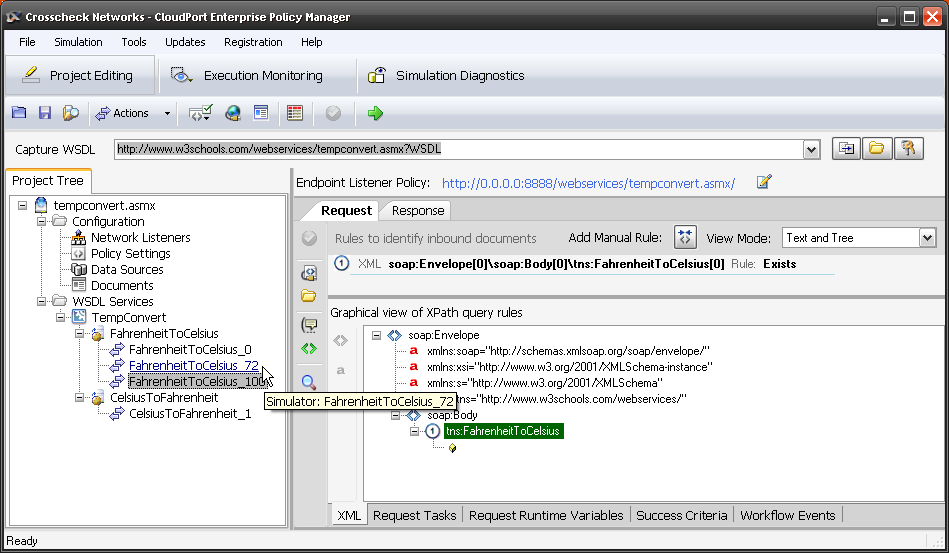

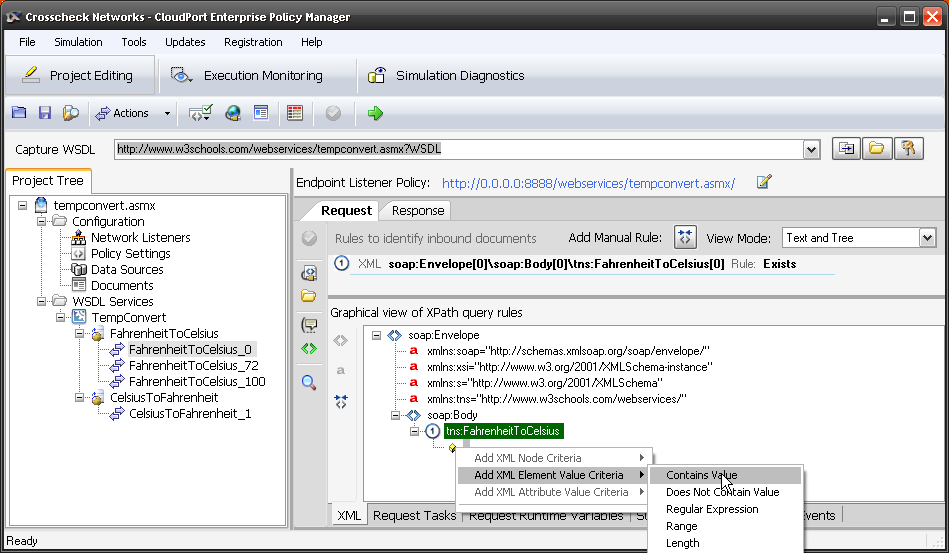

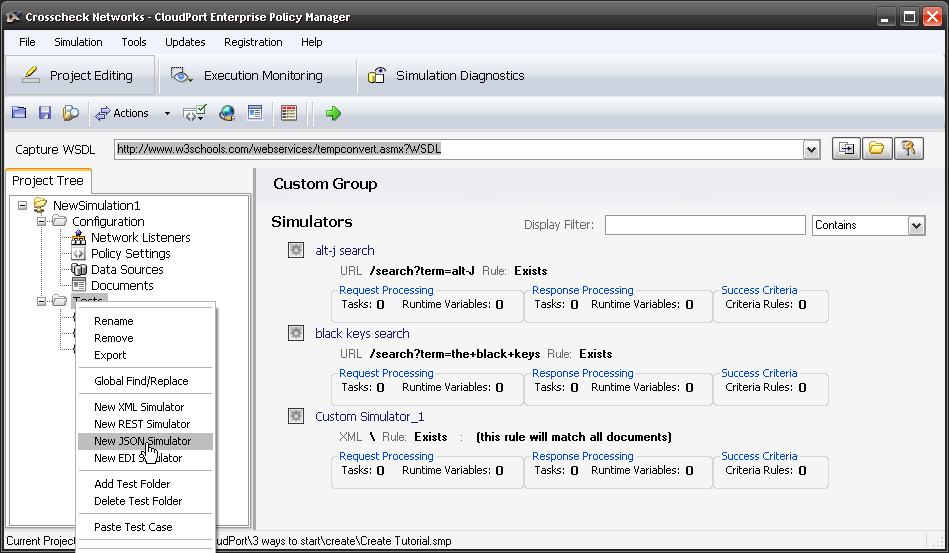

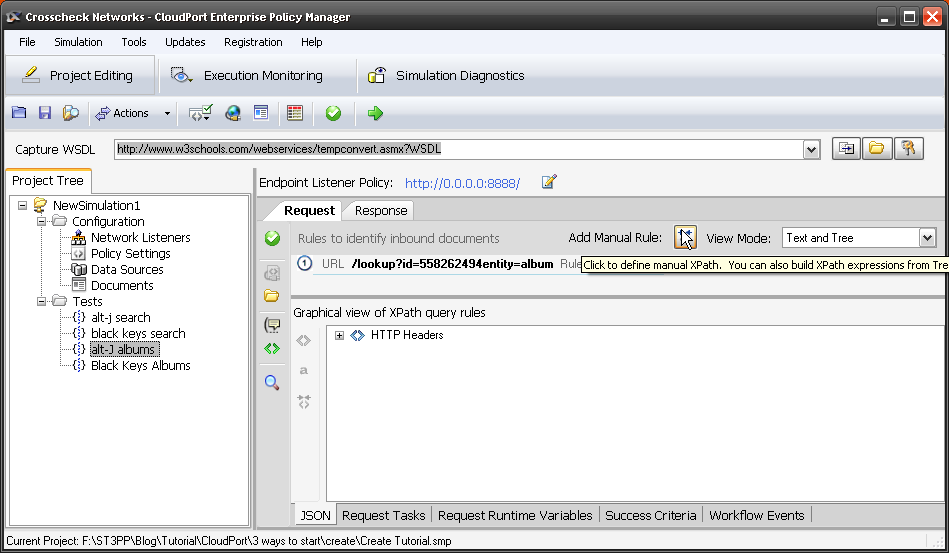

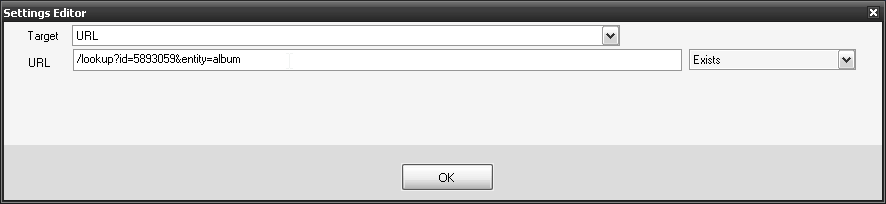

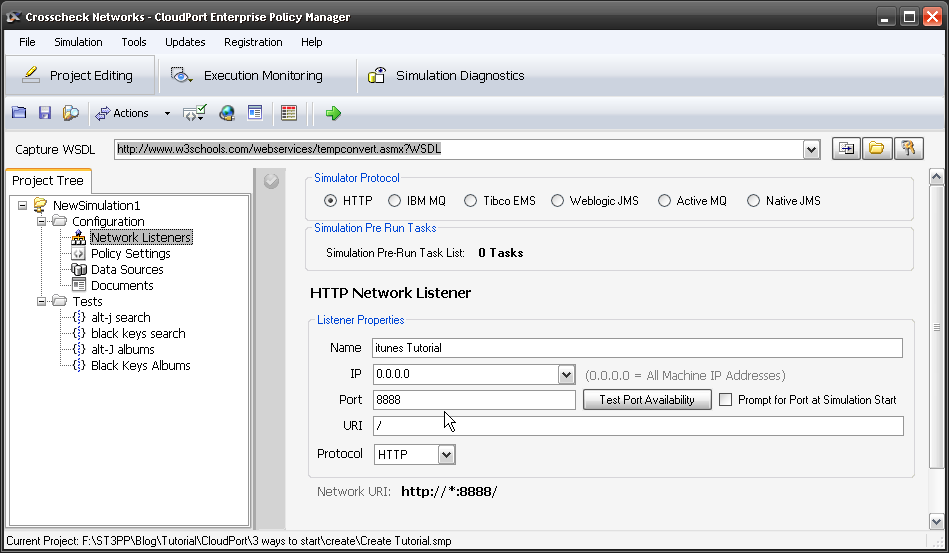

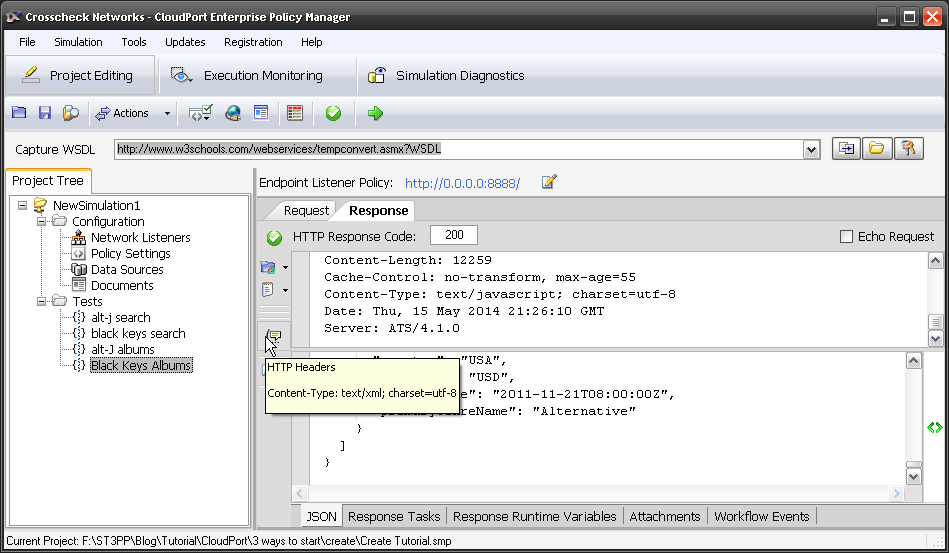

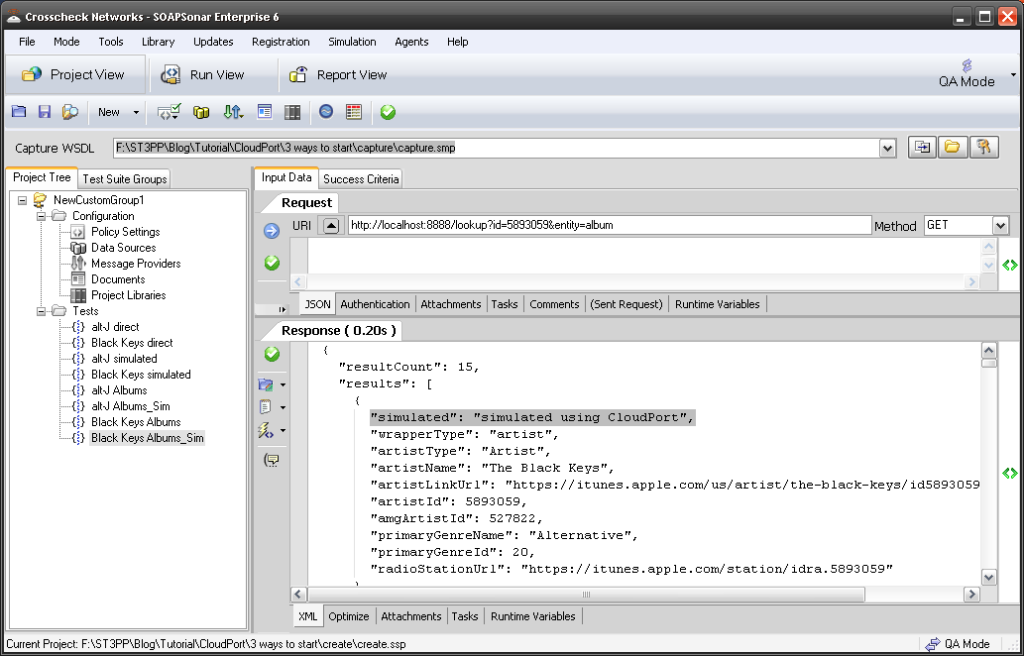

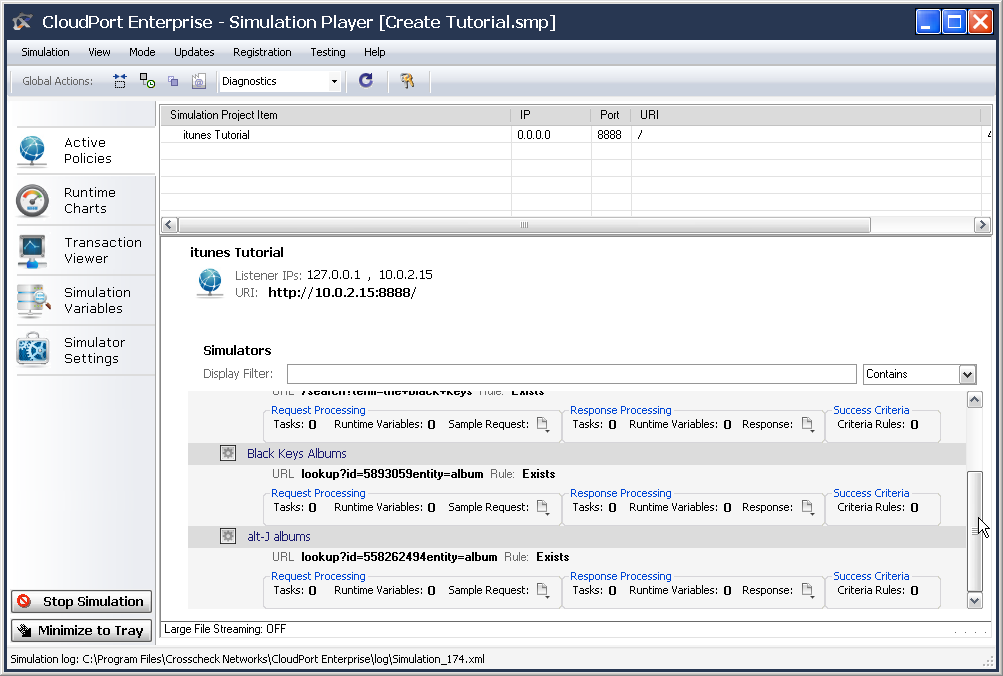

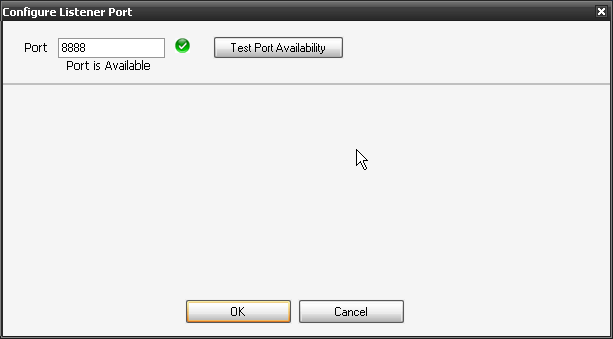

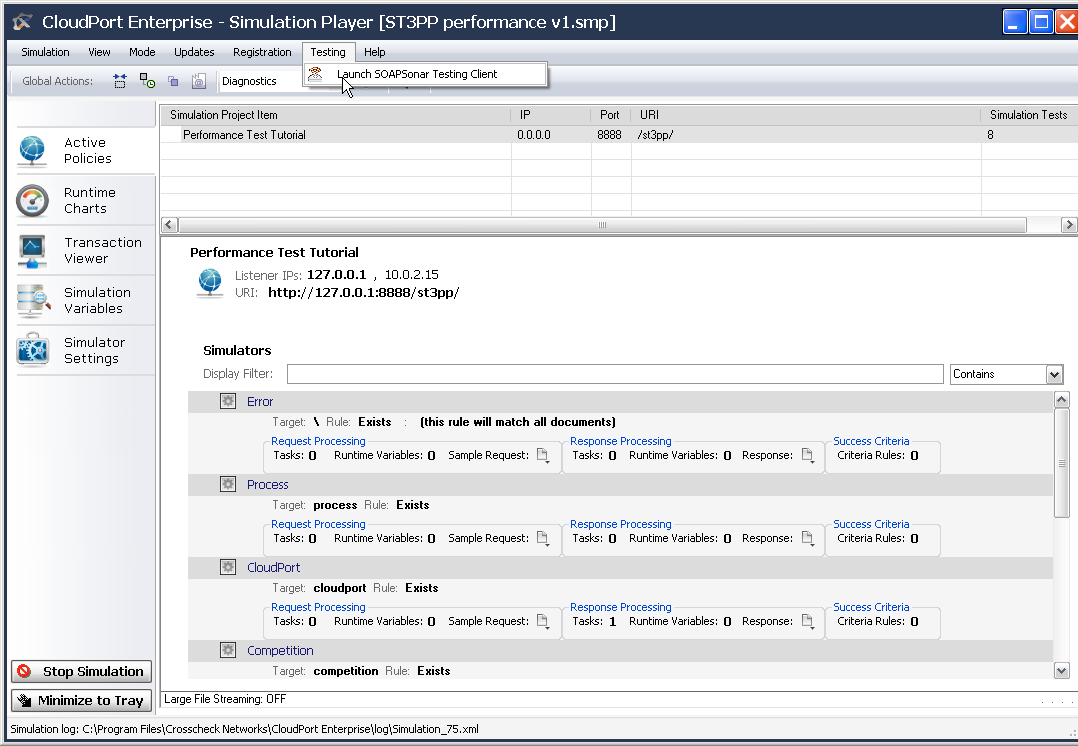

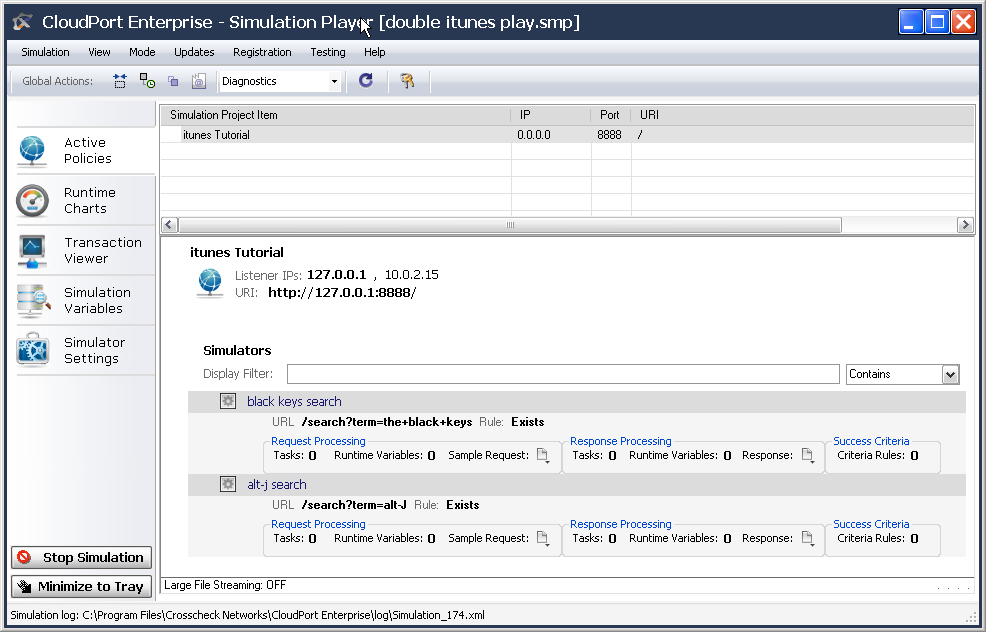

Using Virtualization or Simulated Services to stand in for services either unavailable or that cannot be load tested can be very useful in enabling earlier Systems testing. Simulated services are key trouble shooting tool to eliminate environmental factors like network, cloud, data integrity and servers.

ACCEPTANCE Testing

Acceptance testing is were you suddenly discover if all the work was correctly focussed. Have your Developers and Testers spent all this time developing and testing the right requirements? NIST lists business requirements as the number 1 issue in software development. Often the users and sponsors are not technically minded and extracting the requirements can be very difficult. Bringing Acceptance Testing in earlier to review what is being done can greatly decrease time and effort spent in incorrect requirements delivery.

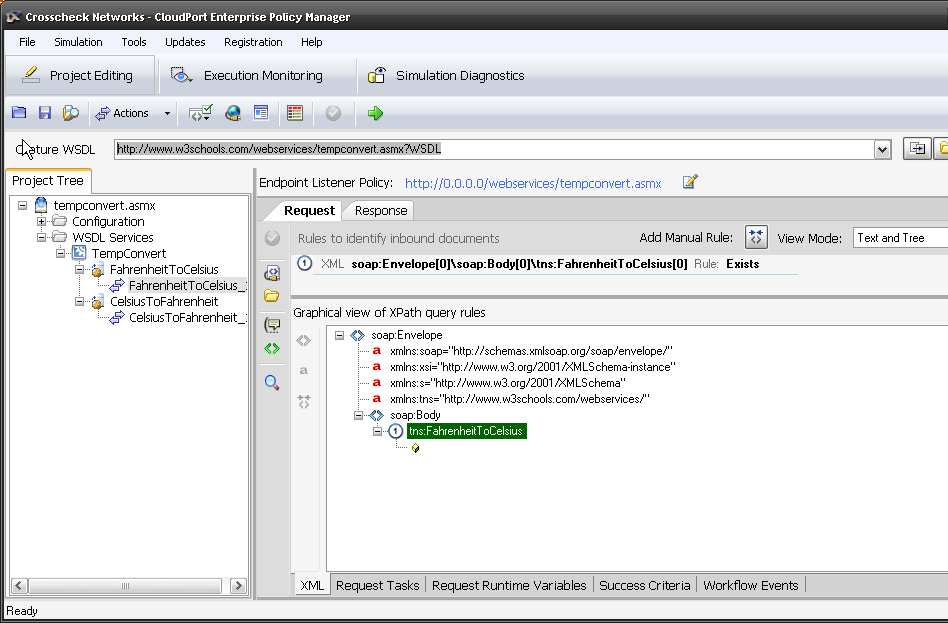

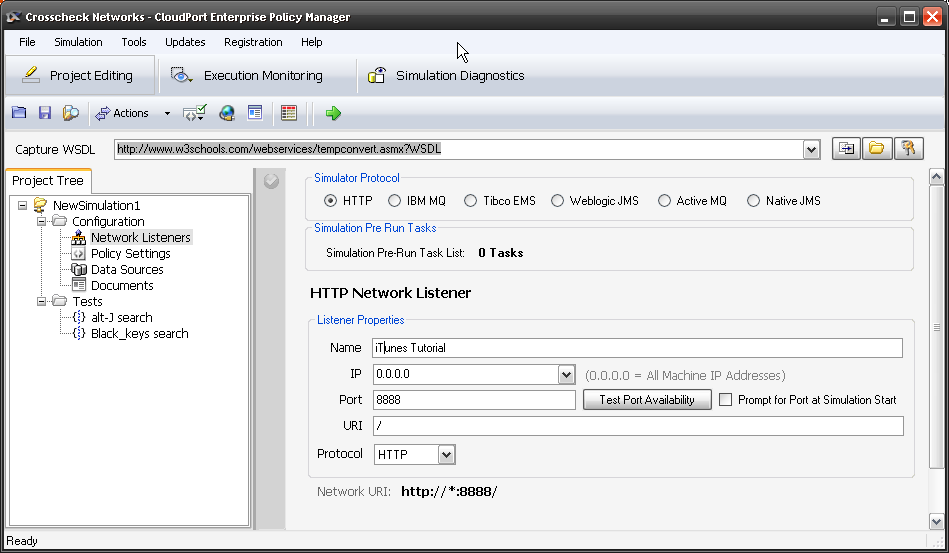

In web services, acceptance testing is usually heavily weighted on the client side usability and visuals. The need to see an example and not just a jpeg. This is another benefit of Simulated services. By creating a basic simulated service earlier in the SDLC, the GUI team can begin work. This GUI can then be used together with the simulated services for earlier acceptance testing and to compare to the end services built.

Regression

Regression testing between releases is an important way to minimize the testing scope. If a regression test of a service shows no code change between release 0.4 and 0.5 then those services were not changed since the last test cycle and need not be tested again. lets say a regression test says 30% of the code was had no changes, using the service plan costing model, how much testing could that save? That said, if they automated, it may be of little benefit not “pushing that button” and retesting them.

Where regression testing really shines is post launch. The maintenance phase of software often costing far more than the development phase. Yet if your automation test cases developed during the development cycle can also be used for regression testing during the maintenance cycle, the effort is greatly reduced. This “continual testing” post production and even API monitoring is rapidly growing area of focus for many organizations as more complexed meshed applications are being developed.

A note here, so often I hear that security or some other team wont allow for automation. The impact however of a single systems test iteration not being supported can cause any automation to be useless on production environment and prevent any regression testing using the previously built test cases.

Conclusion

There is a lot about automation in this post. Every day I hear of benefits and issues with automation. Many organizations try apply automation to their old process and find little benefit and become discouraged. Alternately, a tester involved in load, security or some other single test iteration, seek a tool to do just that iteration – sometimes just for control of their environment, breaking the larger benfit by doing so. The key to successful automation is and end to end approach from the start of the SDLC through. You may choose not to automate the whole process, or use other tools, but the design and application of automation needs to be done with the entire cycle in mind.

The next post will be on ways to reduce the number of unit tests.